For the past 2 weeks I’ve been away from home, so I’ve been doing some reading (instead of coding). I was reading How to Create a Mind by Ray Kurzweil, and it very clearly outlined something I think is very important. It also gave me a few ideas for benchmarks to replicate.

Brains and computers think in very different ways. To summarize the next few minutes of reading: computers can do logical computations effectively, whereas brains cannot, and brains can do pattern recognition effectively, whereas computers cannot.

Here is a thought experiment.

I’m going to give you a sequence of 10 numbers:

Now try to recite those numbers out loud without writing them down. Can you recite them backwards? How about skipping every other number? Sum them up? You probably can’t.

If I handed this sequence of numbers to a computer, it could easily do all of the above tasks. In fact, it could do a lot more! How about sorting them from biggest to smallest? Or reciting them backwards while skipping every 2 numbers? A computer could do all these things easily, because they are logic operations.
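To make that concrete, here is a minimal Python sketch of those tasks. The sequence is a made-up stand-in (it is not the one from the thought experiment), and I'm assuming "skipping every 2" means take one number, then skip the next two.

```python
# Hypothetical 10-number sequence, used only for illustration.
numbers = [7, 3, 9, 1, 4, 8, 2, 6, 5, 0]

backwards = numbers[::-1]                      # recite them backwards
every_other = numbers[::2]                     # skip every other number
total = sum(numbers)                           # sum them up
largest_first = sorted(numbers, reverse=True)  # biggest to smallest

# Backwards, taking one number and then skipping the next two
# (one possible reading of "skipping every 2").
backwards_skipping_two = numbers[::-3]

print(backwards)
print(every_other)
print(total)
print(largest_first)
print(backwards_skipping_two)
```

Each task is a one-liner, which is the point: these are mechanical operations on stored data, with no recognition involved.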

Your mind struggles to do such a thing.

What your mind doesn’t struggle to do is find, recognize, and learn patterns. For example, classify each of these images as either a dog or a cupcake:

That wasn’t too hard, right? How about classifying sheepdog or mop instead?

Maybe that one was a bit harder, but still very much doable! A logical computer would fail miserably… The reason we do so well is that our brains are made up of hundreds of millions of pattern recognizers.

Our neocortex makes up 76% of our brain. The neocortex has an estimated 300 million pattern recognizers, which are used to determine exactly what our senses are registering. Here’s a quick example of how this network works.

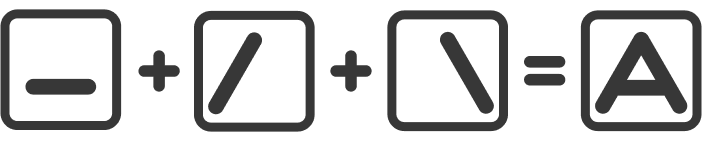

Each pattern recognizer has roughly 100 neurons. Those neurons are used to determine (based on specific inputs) whether a pattern is present or not. We have multiple pattern detectors for the same concepts. For example, the letter A.

There are so many ways to represent it, yet you still understand exactly what it is. When it comes to recognizing A, we have lower level pattern detectors which combine their results to determine whether we see A.

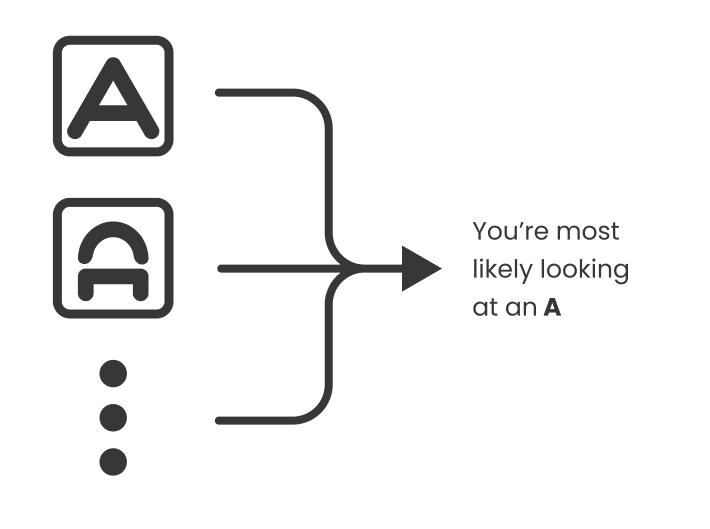
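Here is a toy sketch of that idea in Python: low-level detectors report how strongly they see a feature, and a higher-level recognizer fires when the weighted evidence crosses a threshold. The feature names, weights, and threshold are all made up for illustration; this is not the model from the book, just one simple way to express "lower-level results combine into a decision".

```python
def make_recognizer(weights, threshold):
    """Build a recognizer that fires when weighted evidence reaches the threshold."""
    def fires(inputs):
        # Missing features count as "not seen" (0.0).
        evidence = sum(w * inputs.get(name, 0.0) for name, w in weights.items())
        return evidence >= threshold
    return fires

# Hypothetical low-level features for a capital A.
detect_a = make_recognizer(
    {"left_stroke": 1.0, "right_stroke": 1.0, "crossbar": 1.0},
    threshold=2.5,
)

# Outputs of the lower-level detectors, between 0.0 (absent) and 1.0 (clearly present).
clean_a = {"left_stroke": 1.0, "right_stroke": 1.0, "crossbar": 0.9}
inverted_v = {"left_stroke": 1.0, "right_stroke": 1.0, "crossbar": 0.0}

print(detect_a(clean_a))     # the A recognizer fires
print(detect_a(inverted_v))  # no crossbar, so it stays quiet
```

Because the inputs are graded rather than all-or-nothing, a slightly faint crossbar still counts as an A, which is roughly how sloppy handwriting can still be read.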

For common patterns that we see often, we have many more pattern recognizers, which makes it even faster to determine whether a pattern is actually there or not.

So when you detect something (in this example, the letter A), you have hundreds, maybe thousands, of pattern recognizers firing, telling you you’re looking at an A.

The conclusion of whether you’re looking at an A or not can then be passed further up: into what word that letter is in, what the sentence says, the idea of that sentence, how it pertains to the paragraph, and ideas that you may have.

In the case of something like an apple, we have different pattern recognizers for different senses. You could have multiple for what an apple looks like, more for how it tastes, how it feels in your hand, and even how it smells. When these pattern recognizers combine and outweigh other conclusions, you can determine that it is in fact an apple.
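A rough sketch of "combine and outweigh", assuming each sense reports a score per candidate and the candidate with the most total evidence wins. The candidates and scores are invented for the example, and summing is just one simple way to combine them.

```python
# Hypothetical evidence scores (0.0 to 1.0) from per-sense recognizers.
evidence = {
    "apple":  {"sight": 0.9, "taste": 0.8, "touch": 0.7, "smell": 0.6},
    "tomato": {"sight": 0.7, "taste": 0.1, "touch": 0.5, "smell": 0.1},
}

def best_concept(evidence):
    """Sum the evidence across senses and return the winning concept with all totals."""
    totals = {concept: sum(senses.values()) for concept, senses in evidence.items()}
    winner = max(totals, key=totals.get)
    return winner, totals

winner, totals = best_concept(evidence)
print(winner)
```

Sight alone makes this a fairly close call between the two candidates; it is the other senses that push the apple conclusion well ahead.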

Computers still struggle to do these pattern recognition tasks. But I can use these ideas as benchmarks for projects. So here’s a timeline of near-future projects:

- Finish coding my transfer learning hypothesis (proposed 4 weeks ago 😅)
- Design a pattern recognizer that detects A or not A
- Evolve a pattern recognizer that detects A or not A using under 100 neurons
- Design an alphabet recognizer that uses 5 pattern recognizers per letter

I tend to agree with your hypothesis from Dec 11. Processing and pattern recognition should logically progress logarithmically rather than arithmetically.

As you suppose, this is due to transference: previous knowledge being applied to the acquisition of new knowledge.

The tabula rasa is really only applicable to organic intelligence in the first few seconds; the slate fills quickly.

In organic intelligence, however, storage capacity, and thus to some extent the speed of knowledge acquisition, is limited by the physical nature of the organism. Hence the decline of neuroplasticity with age. In AI this issue can be overridden by simply building bigger, to put it crudely.

In theory, if it can recognize some patterns, it should be able to recognize similar ones more easily. The trick will be applying pattern to concept, as in the dog example. It must learn what a dog is, not just that a specific pattern is a dog and not a muffin. That is only a dog, not "dog".

Will this come through the recognition of more patterns, or is more required?

Well explained. Glad that you seem to be enjoying the book :)