This week I finally made presentable progress on the hypothesis I presented 6 weeks ago. To sum it up, I think that if a network is trained to complete a task, it will be capable of learning other tasks faster than a network learning them from scratch. You can read more about it here:

I got the neural networks of the NEAT algorithm up and running! Everything is almost working! The neat-python library is working wonders, speciation is up, network evolution is up, fitness tracking is up, everything is up and running except 1 thing, and it happens to be quite vital.

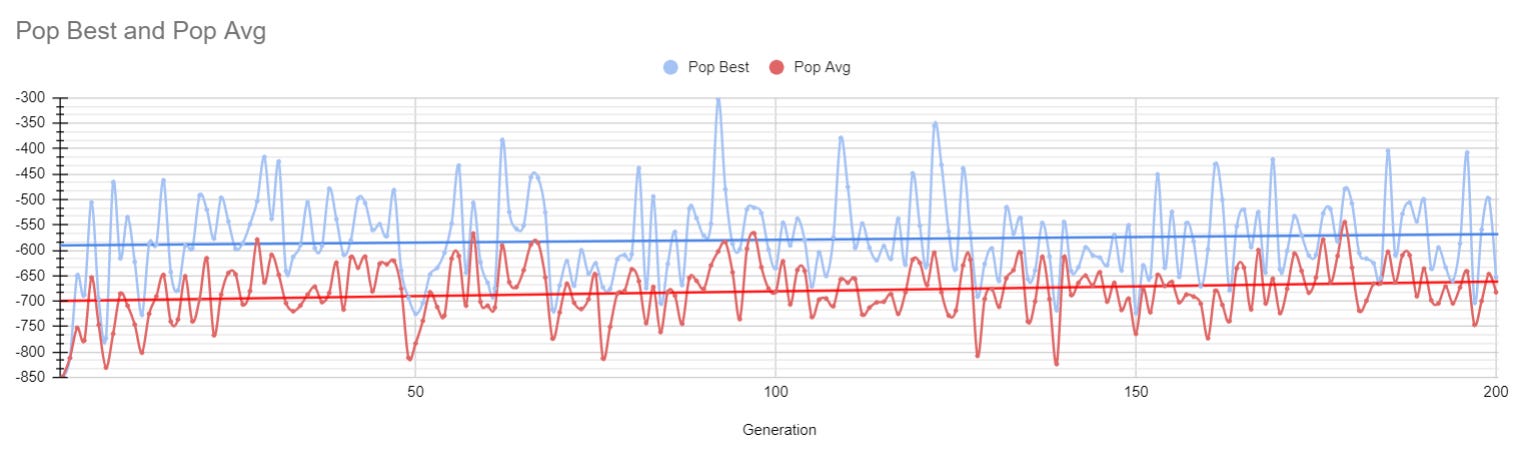

These are the results of 200 generations of evolution. In each generation there were 50 agents, each one trying to get as high up in a level as possible. They each had the option to move left, move right, and jump. Here is a quick video with a few generations.

Now you may notice that throughout the training process, the agents’ overall score increases by about 25 points. For reference, that is the equivalent of being ~2 tiles higher. In other words, they barely learned anything…

So what is going wrong? Well, for one, I have limited knowledge of how the pygame library works in Python. Because of this limited knowledge, I opted to program a very basic vision system for the agents. By very basic, I mean really, really basic. So basic it’s bad. It would be like if your eyes would randomly rotate your vision and shift it from side to side whenever you took a step forward.

Surprise surprise, the agents did not like that 😅

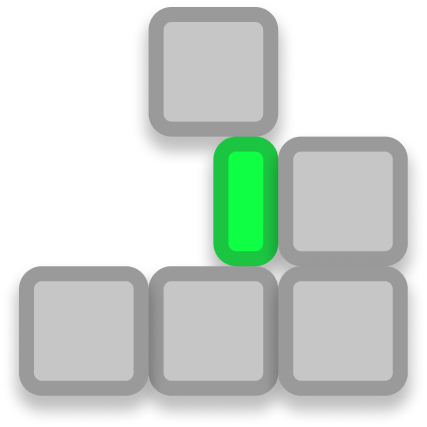

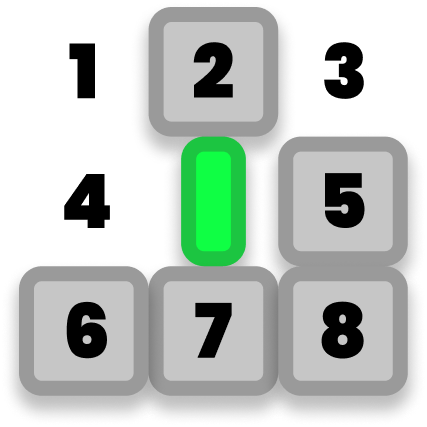

Here’s a screenshot of an agent from the video above:

I cleaned it up with some graphics to make it more easily understandable:

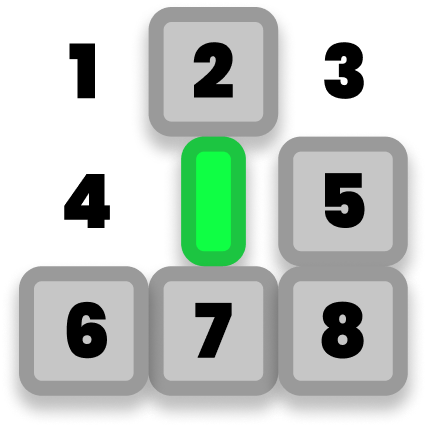

Each agent has a total of 9 inputs, meaning it has 9 variables to work from. 1 input for each of the 8 tiles around it, and 1 more which equals its current height in the level:

To get the distances to each tile, you can check if there are any tiles within 16 pixels of an agent. Let’s break down the following method:

```python
# Needs "import math" and "import random" at the top of the file.
def get_distances_to_tiles(self):
    distances = []
    agent_x = self.rect.center[0]
    agent_y = self.rect.center[1]
    for tile in self.tiles:
        tile_x = tile.rect.center[0]
        tile_y = tile.rect.center[1]
        distance = math.sqrt((tile_x - agent_x) ** 2 + (tile_y - agent_y) ** 2)
        if distance < 16:
            distances.append(distance + random.random() - random.random())
    return distances
```

I start by initializing a new list of values.

```python
distances = []
```

Next step is to get the agent’s position into 2 different variables. I do this by accessing the agent’s bounding box, and getting the center of the bounding box.

```python
agent_x = self.rect.center[0]
agent_y = self.rect.center[1]
```

Next I loop through every single tile in the level, and calculate its distance compared to the agent.

```python
for tile in self.tiles:
    tile_x = tile.rect.center[0]
    tile_y = tile.rect.center[1]
    distance = math.sqrt((tile_x - agent_x) ** 2 + (tile_y - agent_y) ** 2)
```

If the distance is less than 16 pixels, then I know it is part of the 8 tiles, and add it to a list of distances which will be used as the inputs.

```python
if distance < 16:
    distances.append(distance + random.random() - random.random())
```

When appending the distance, one small random value is added and another is subtracted to help prevent over-fitting.
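To see what this method returns without starting pygame, here is a minimal standalone sketch of the same logic. It assumes the agent and tile centers are plain `(x, y)` tuples. The function name `distances_to_nearby_tiles` and the `radius` and `jitter` parameters are made up for this illustration and are not part of the game code. It also uses `math.hypot` in place of the hand-written square root.

```python
import math
import random


def distances_to_nearby_tiles(agent_center, tile_centers, radius=16, jitter=True):
    """Return the distance to every tile whose center is closer than `radius`.

    Hypothetical standalone version of get_distances_to_tiles: centers are
    plain (x, y) tuples instead of pygame rects.
    """
    agent_x, agent_y = agent_center
    distances = []
    for tile_x, tile_y in tile_centers:
        distance = math.hypot(tile_x - agent_x, tile_y - agent_y)
        if distance < radius:
            # random() - random() lies strictly between -1 and 1 and averages 0
            noise = random.random() - random.random() if jitter else 0.0
            distances.append(distance + noise)
    return distances


# Example: two tiles in range, one far away (jitter switched off for readability)
tiles = [(10, 0), (0, 12), (100, 100)]
print(distances_to_nearby_tiles((0, 0), tiles, jitter=False))  # prints [10.0, 12.0]
```

The returned list has one entry per tile in range, so its length changes as the agent moves around the level.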

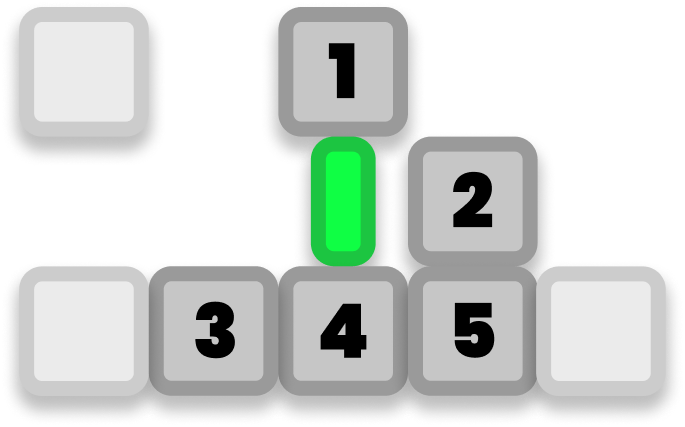

This might seem well and good, until you dig a bit deeper. Let’s go back to our image of the agent here with the 8 inputs.

In this case, since tile 4 is missing and tile 2 is present, the logical thing to do would be to move left then jump. And that is correct! Except that the way the current inputs are programmed, it ignores all empty tiles…

So the actual inputs look something more like this:

Again, not too bad. We can deal with this. Let’s say the network links its input with an id of 2 to moving left. So if there is something present at 2 → move left. And in the current context, that makes a lot of sense! In the agent’s current position, the tile to the right of it is blocked off, so it should move left and re-evaluate. Let’s do that!

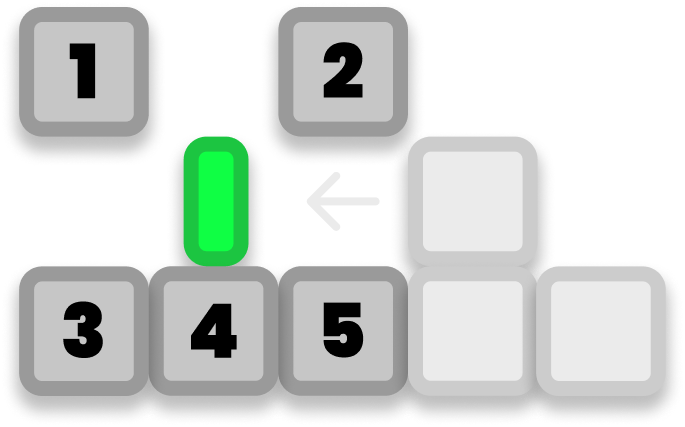

I hope this image illustrates the problem. Because the current input detection ignores empty tiles, suddenly, tile #2 is in the top right??? That messes things up for our agent. Now it no longer makes sense for it to move left, but its algorithm still tells it to do so.
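The shift can be reproduced in a few lines without pygame. This is only a sketch under assumptions: the 8 neighbours are scanned in a fixed order (top left to bottom right), tiles are given as grid cells rather than pixels, and the names `NEIGHBOUR_OFFSETS` and `compact_inputs` are invented for the illustration. The input numbering here is my own and does not necessarily match the images. As in the current code, empty positions are skipped.

```python
# Fixed scan order for the 8 neighbours, as (dx, dy) grid offsets (y grows downward)
NEIGHBOUR_OFFSETS = [(-1, -1), (0, -1), (1, -1),
                     (-1, 0),           (1, 0),
                     (-1, 1),  (0, 1),  (1, 1)]


def compact_inputs(agent_cell, tile_cells):
    """List only the neighbours that hold a tile, skipping empty ones."""
    ax, ay = agent_cell
    return [(dx, dy) for dx, dy in NEIGHBOUR_OFFSETS
            if (ax + dx, ay + dy) in tile_cells]


# Hypothetical level: a floor under the agent and a wall to its right
tile_cells = {(4, 6), (5, 6), (6, 6), (6, 5), (6, 4)}

before = compact_inputs((5, 5), tile_cells)  # agent standing next to the wall
after = compact_inputs((4, 5), tile_cells)   # same agent, one step to the left

print(before[0], after[0])  # prints (1, -1) (0, 1)
```

Input 0 describes the top-right tile before the step and the tile directly below the agent afterwards, and the list shrinks from five entries to two. A network that learned "input 0 present → move left" is now reacting to a completely different tile.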

As I mentioned at the beginning of this post, the neural networks are working! :D

But the agents have inconsistent inputs and my pygame skills are not yet deep enough for me to come up with a solution. I will continue to explore options throughout the week, but if you know anyone who has a lot of expertise in pygame, please share this post with them! Any kind of help is greatly appreciated :D
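One possible direction is to give each of the 8 surrounding positions its own fixed input slot and to write a placeholder value when that position is empty, so that a given input always refers to the same place. The sketch below is untested against the actual game and rests on several assumptions: tiles sit on a regular grid with a hypothetical `TILE_SIZE` of 16 pixels, positions are plain `(x, y)` pixel centers, and `fixed_slot_inputs` is an illustrative name. It also swaps the distance values for simple occupied/empty flags, which is a different technique from the one in the current code.

```python
TILE_SIZE = 16  # assumed tile width/height in pixels (hypothetical value)

# One fixed slot per neighbour, always in this order (y grows downward)
NEIGHBOUR_OFFSETS = [(-1, -1), (0, -1), (1, -1),
                     (-1, 0),           (1, 0),
                     (-1, 1),  (0, 1),  (1, 1)]


def fixed_slot_inputs(agent_center, tile_centers, height):
    """Build 9 inputs: 8 occupancy flags in a fixed order, plus the height."""
    # Convert pixel centers to grid cells so neighbours can be looked up directly
    occupied = {(x // TILE_SIZE, y // TILE_SIZE) for x, y in tile_centers}
    cell_x = agent_center[0] // TILE_SIZE
    cell_y = agent_center[1] // TILE_SIZE
    inputs = [1.0 if (cell_x + dx, cell_y + dy) in occupied else 0.0
              for dx, dy in NEIGHBOUR_OFFSETS]
    inputs.append(float(height))
    return inputs


# Agent in cell (5, 5) with one tile to its right and one directly below
tiles = [(6 * 16 + 8, 5 * 16 + 8), (5 * 16 + 8, 6 * 16 + 8)]
print(fixed_slot_inputs((5 * 16 + 8, 5 * 16 + 8), tiles, height=40))
# prints [0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 1.0, 0.0, 40.0]
```

The list always has 9 entries, so slot 4 always means "tile to the right" no matter which other tiles are present. The trade-off is that the distance information is replaced by a plain flag.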

Well, that concludes this post. As I have reiterated a few times, many of the systems which need to be functional are in place. I just need to give the agents consistent vision, and then I will be good to test my hypothesis.

This is very interesting. So the theory seems correct but your technical skill is not such as to optimally demonstrate?

Ok so an issue, you do need more skill on the platform, we will take that as given, but given that can you actually say the theory is correct? It appears as such and glad you state it as such, that it appears to be, for to state it is would be invalid.

The possibility is that you are entirely correct or it could be that the theory has reached its limitations despite possible greater skill.

Both are reasonable assumptions

But it will be interesting to see how it progresses.

And I do feel intrinsically that you may be correct.

interesting topic ... good luck in your journey!