Last week I claimed my hypothesis would take only 7 days to test. I was wrong: testing it is taking much longer than I expected.

My original hypothesis, re-using old neurons from a trained neural network, turns out to be something that already exists! It's called transfer learning, and I am currently reading the relevant literature.

But! That’s not what this post is about.

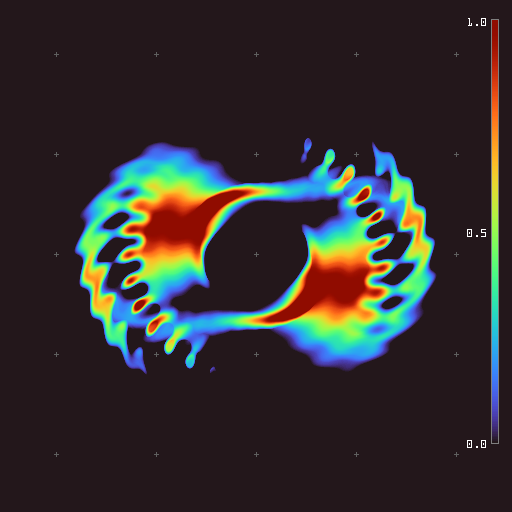

Earlier this week I had a call with Bert Chan (@BertChakovsky on Twitter), the creator of Lenia. Lenia is a convolutional cellular automaton: its ruleset is simple, yet complex patterns emerge from it, and those patterns are classified as life forms. One of the key themes of our call was emergence. Bert did not design the life forms found in Lenia; he designed the environment from which they could evolve.
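For anyone who hasn't seen Lenia, its "simple ruleset" really does fit in a few lines. Below is a minimal pure-Python sketch of a single-channel Lenia-style update as I understand it: each cell takes a ring-weighted sum of its neighbourhood (the convolution), passes that through a bell-shaped growth function, and adds a small step of the result to its own state, clipped to [0, 1]. The function names, the kernel shape and the parameter values (`mu=0.15`, `sigma=0.015`, `dt=0.1`) are my own illustrative choices, not Bert's code.

```python
import math


def ring_kernel(radius):
    """Ring-shaped kernel peaking at half the radius, normalised to sum to 1.

    Uses the bump exp(4 - 1/(r(1-r))) for 0 < r < 1. radius must be >= 2 so
    that at least one neighbour falls strictly inside the ring.
    """
    weights = {}
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            r = math.hypot(dx, dy) / radius
            if 0 < r < 1:
                weights[(dy, dx)] = math.exp(4 - 1 / (r * (1 - r)))
    total = sum(weights.values())
    return {offset: w / total for offset, w in weights.items()}


def growth(u, mu=0.15, sigma=0.015):
    """Gaussian growth mapping: +1 when u == mu, approaching -1 far from it."""
    return 2 * math.exp(-((u - mu) ** 2) / (2 * sigma ** 2)) - 1


def lenia_step(grid, kernel, dt=0.1, mu=0.15, sigma=0.015):
    """One update A <- clip(A + dt * G(K * A), 0, 1) on a wrap-around grid."""
    h, w = len(grid), len(grid[0])
    new_grid = []
    for y in range(h):
        row = []
        for x in range(w):
            # Weighted neighbourhood sum: the convolution K * A at (y, x).
            u = sum(k * grid[(y + dy) % h][(x + dx) % w]
                    for (dy, dx), k in kernel.items())
            row.append(min(1.0, max(0.0, grid[y][x] + dt * growth(u, mu, sigma))))
        new_grid.append(row)
    return new_grid
```

The interesting behaviour only appears when the grid is seeded with the right pattern and the parameters are tuned; an empty grid simply stays empty, because zero neighbourhood activity maps to negative growth.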

I believe humans are not currently intelligent enough to design AGI systems directly. Simply put, there would be too many systems and sub-systems for us to conceptualize properly. Instead of designing these systems, I think we should evolve them.

That said, I am unsure how many of these systems we can actually evolve artificially. Neuro-evolution can already produce basic reasoning skills (for example, an agent that plays Super Mario World), but AGI needs many other systems too.

I think one key aspect of AGI will be natural language processing (NLP). Current NLP models (like OpenAI's ChatGPT) have a pre-defined architecture. In a basic neural network, every neuron in one layer is connected to every neuron in the next layer. In NEAT (NeuroEvolution of Augmenting Topologies, a popular and powerful neuro-evolution algorithm), the network's structure and connections are evolved instead, starting from a minimal network.

That means not every neuron is connected to every other neuron. So if an input neuron is inactive (its value is zero), some hidden neurons may never receive a signal, and simulating them would waste processing power.
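To make "the structure is evolved" more concrete, here is a heavily simplified sketch of NEAT's two structural mutations: adding a connection, and adding a neuron by splitting an existing connection (the old link is disabled, the new incoming link gets weight 1.0 and the outgoing one inherits the old weight). The class and method names are my own. Real NEAT also has weight mutation, crossover, speciation and innovation numbers shared across the whole population, all of which are left out here, and I restrict new connections to ones that keep the network feed-forward.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Connection:
    src: int
    dst: int
    weight: float
    enabled: bool = True
    innovation: int = 0  # historical marker; here just a per-genome counter


@dataclass
class Genome:
    inputs: list
    outputs: list
    hidden: list = field(default_factory=list)
    connections: list = field(default_factory=list)
    next_innovation: int = 0

    def connect(self, src, dst, weight):
        self.next_innovation += 1
        self.connections.append(Connection(src, dst, weight, True, self.next_innovation))

    def reaches(self, start, target):
        """True if target can be reached from start along existing connections."""
        stack, seen = [start], {start}
        while stack:
            node = stack.pop()
            if node == target:
                return True
            for c in self.connections:
                if c.src == node and c.dst not in seen:
                    seen.add(c.dst)
                    stack.append(c.dst)
        return False

    def mutate_add_connection(self, rng):
        """Add one new connection that keeps the network acyclic, if one exists."""
        existing = {(c.src, c.dst) for c in self.connections}
        candidates = [
            (s, d)
            for s in self.inputs + self.hidden
            for d in self.hidden + self.outputs
            if s != d and (s, d) not in existing and not self.reaches(d, s)
        ]
        if not candidates:
            return False
        src, dst = rng.choice(candidates)
        self.connect(src, dst, rng.uniform(-1.0, 1.0))
        return True

    def mutate_add_node(self, rng):
        """Split an enabled connection src->dst into src->new->dst."""
        enabled = [c for c in self.connections if c.enabled]
        if not enabled:
            return False
        old = rng.choice(enabled)
        old.enabled = False
        new_id = max(self.inputs + self.outputs + self.hidden) + 1
        self.hidden.append(new_id)
        self.connect(old.src, new_id, 1.0)         # weight 1.0 into the new neuron
        self.connect(new_id, old.dst, old.weight)  # old weight out of it
        return True


def minimal_genome(n_inputs, n_outputs, rng):
    """Minimal starting point: every input wired straight to every output."""
    g = Genome(inputs=list(range(n_inputs)),
               outputs=list(range(n_inputs, n_inputs + n_outputs)))
    for i in g.inputs:
        for o in g.outputs:
            g.connect(i, o, rng.uniform(-1.0, 1.0))
    return g


rng = random.Random(0)
genome = minimal_genome(n_inputs=2, n_outputs=1, rng=rng)
genome.mutate_add_node(rng)        # route one input->output link through new neuron 3
genome.mutate_add_connection(rng)  # wire the other input into neuron 3
print(genome.hidden, len(genome.connections))  # [3] 5
```

Because the network only grows when a mutation happens to help, evolved networks tend to stay sparse, which is exactly what makes skipping idle neurons possible.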

A large evolved language model might develop different sections for different types of text. When it is analyzing non-sarcastic text, for example, all the sarcasm neurons would be temporarily idle and would not need to be simulated. By doing a forward search from the active input neurons to the outputs, we can map out which neurons need to be simulated and ignore the rest. By simulating only the necessary neurons, we could save processing power.
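Here is a rough sketch of that idea on a toy, hand-wired network. It assumes the network is feed-forward (no recurrent connections), that neurons have no bias, and that they use tanh, so a neuron that receives no signal outputs exactly 0 and skipping it changes nothing. With biases, or an activation like the sigmoid (which outputs 0.5 at zero input), idle neurons would still affect the output and this shortcut would no longer be exact. Besides the forward search from active inputs, it also searches backward from the outputs, so neurons that are reachable but never feed an output (like `h_dead_end`) are skipped too. All neuron names are hypothetical.

```python
import math
from collections import deque


def reachable(start, adjacency):
    """All nodes reachable from `start` (inclusive) by breadth-first search."""
    seen = set(start)
    queue = deque(start)
    while queue:
        node = queue.popleft()
        for nxt in adjacency.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def needed_neurons(inputs, outputs, connections):
    """Neurons on some path from a non-zero input to an output."""
    forward, backward = {}, {}
    for src, dst, _ in connections:
        forward.setdefault(src, []).append(dst)
        backward.setdefault(dst, []).append(src)
    active = [name for name, value in inputs.items() if value != 0.0]
    return reachable(active, forward) & reachable(outputs, backward)


def evaluate(inputs, outputs, connections):
    """Evaluate only the needed neurons; every other neuron is known to output 0."""
    needed = needed_neurons(inputs, outputs, connections)
    incoming = {}
    for src, dst, weight in connections:
        if src in needed and dst in needed:
            incoming.setdefault(dst, []).append((src, weight))
    cache = {}

    def value(node):
        if node in inputs:
            return inputs[node]
        if node not in cache:
            total = sum(w * value(src) for src, w in incoming.get(node, ()))
            cache[node] = math.tanh(total)  # no bias, so an idle neuron gives tanh(0) == 0
        return cache[node]

    return {out: value(out) if out in needed else 0.0 for out in outputs}, needed


inputs = {"in_plain": 1.0, "in_sarcasm": 0.0}  # non-sarcastic text
connections = [
    ("in_plain", "h_plain", 0.8),
    ("in_sarcasm", "h_sarcasm", 1.5),
    ("in_plain", "h_dead_end", 0.3),  # never reaches the output
    ("h_plain", "out", 1.0),
    ("h_sarcasm", "out", -1.0),
]
result, needed = evaluate(inputs, ["out"], connections)
print(sorted(needed))  # ['h_plain', 'in_plain', 'out']
```

Only three of the six neurons are computed, and the output is the same as evaluating the whole network, because `h_sarcasm` would have contributed `-1.0 * tanh(0) = 0`.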

This could also allow complex sections of the brain to be evolved and then simply stored away until they are needed. I think that with systems similar to NEAT, we could evolve much more complex behaviours.

So that is my new direction: once I have shown that my transfer learning hypothesis works, I want to create a neuro-evolution-based natural language processing model.

To achieve this, I am doing two things:

1. Learning about regular NLP models and the fundamentals of how they work.
2. Learning about how human language evolved.

I don't have much to say about either yet, as these are my topics to explore next week.

How effective will this be? No clue. How will I build it? Also no clue.

I have a lot of uncertainty ahead of me and a lot to figure out. I decided to share these ideas even though they are only half-baked, because someone out there may know something that can help me.

So if you know any good resources about NLP models that I should know about, please send them my way in the comments. If you have any feedback or thoughts on these ideas, leave them below as well. If you know someone who may be able to help me, consider sharing this post with them.

That is all for this week. It was a lot of theory, but I wanted to share what I am currently thinking about.
